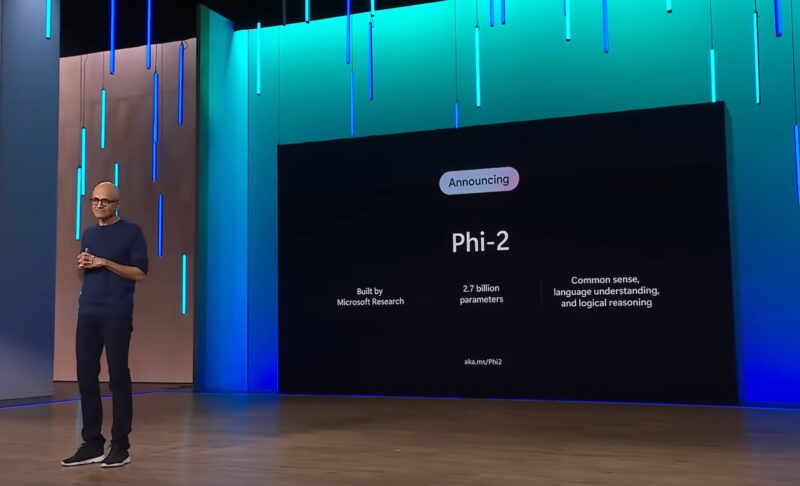

Microsoft’s 2.7-billion-parameter model Phi-2 showcases outstanding reasoning and language understanding capabilities, setting a new standard for performance among base language models with fewer than 13 billion parameters.

Phi-2 builds upon the success of its predecessors, Phi-1 and Phi-1.5, by matching or surpassing models up to 25 times larger—thanks to innovations in model scaling and training data curation.

The compact size of Phi-2 makes it an ideal playground for researchers, facilitating exploration in mechanistic interpretability, safety improvements, and fine-tuning experimentation across various tasks.

Phi-2’s achievements are underpinned by the two aspects noted above: training data curation and innovations in model scaling.

Performance evaluation

Phi-2 has undergone rigorous evaluation across various benchmarks, including Big Bench Hard, commonsense reasoning, language understanding, math, and coding.

With only 2.7 billion parameters, Phi-2 outperforms larger models – including Mistral and Llama-2 – and matches or outperforms Google’s recently announced Gemini Nano 2.

Beyond benchmarks, Phi-2 also performs well in real-world scenarios. In tests with prompts commonly used in the research community, it solves physics problems and corrects student mistakes, demonstrating versatility beyond standard evaluations.

Phi-2 is a Transformer-based model with a next-word prediction objective, trained on 1.4 trillion tokens from synthetic and web datasets. Training was conducted on 96 A100 GPUs over 14 days. Microsoft says the process focuses on maintaining a high level of safety and claims that Phi-2 surpasses open-source models in terms of toxicity and bias.
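To put the training figures in perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above (2.7 billion parameters, 1.4 trillion tokens, 96 GPUs, 14 days). The function names are illustrative, and the throughput estimate assumes the GPUs ran continuously for the full 14 days, which the announcement does not state.

```python
# Figures quoted in the article.
PARAMS = 2.7e9         # Phi-2 parameter count
TRAIN_TOKENS = 1.4e12  # tokens seen during training
GPUS = 96              # A100 GPUs used
DAYS = 14              # reported training duration


def gpu_hours(gpus: int, days: int) -> int:
    """Total GPU-hours, assuming every GPU runs 24 hours a day."""
    return gpus * days * 24


def tokens_per_parameter(tokens: float, params: float) -> float:
    """Ratio of training tokens to model parameters."""
    return tokens / params


def tokens_per_gpu_second(tokens: float, gpus: int, days: int) -> float:
    """Average tokens processed per GPU per second.

    Assumption: uninterrupted training for the whole period.
    """
    return tokens / (gpu_hours(gpus, days) * 3600)


if __name__ == "__main__":
    print(f"GPU-hours: {gpu_hours(GPUS, DAYS):,}")
    print(f"Tokens per parameter: {tokens_per_parameter(TRAIN_TOKENS, PARAMS):.0f}")
    print(f"Tokens per GPU-second: {tokens_per_gpu_second(TRAIN_TOKENS, GPUS, DAYS):,.0f}")
```

Under those assumptions, the run works out to roughly 32,000 GPU-hours and a little over 500 training tokens per parameter. These are estimates derived from the headline numbers, not figures reported by Microsoft.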

With the announcement of Phi-2, Microsoft continues to push the boundaries of what smaller base language models can achieve.

(Image Credit: Microsoft)

Source: artificialintelligence-news